Artificial Intelligence (AI) is at an inflection point of adoption in the credentialing and testing industry. In this article, we take a closer look at how AI is used in exam development and administration, and at how “Ethical AI” can serve as a guiding principle for adopting this technology without introducing bias or compromising the integrity or validity of examinations.

What does “Ethical AI” mean?

In the examination development and testing industry, “Ethical AI” refers to the implementation of AI technology while maintaining integrity, fairness, and trust in the assessment process. Adhering to ethical guidelines helps build confidence in AI-enhanced systems and ensures that they serve the intended purpose without causing harm or introducing bias.

How will AI be adopted in the testing industry?

AI is already being used in several ways to enhance the processes and systems of test development and delivery. Here are a few examples:

- Test Development: AI can be used to assist in item writing, allowing SMEs to apply their knowledge to editing AI-generated content.

- Test Integrity: AI can be used to monitor live test sessions and analyze video recordings of remote proctored exam sessions to enhance proctors’ ability to identify bad actors.

- Exam Maintenance: AI can automate routine exam maintenance functions, shifting the maintenance role of SMEs from primary reviewers to evaluators of AI recommendations.

As AI technologies continue to advance, AI is expected to be applied to a broader array of uses in the testing industry.

To learn more about how AI can be implemented to advance the way certification programs are developed and administered, read this recent article: 4 Ways AI Can Be Used in Credentialing.

What are the ethical considerations?

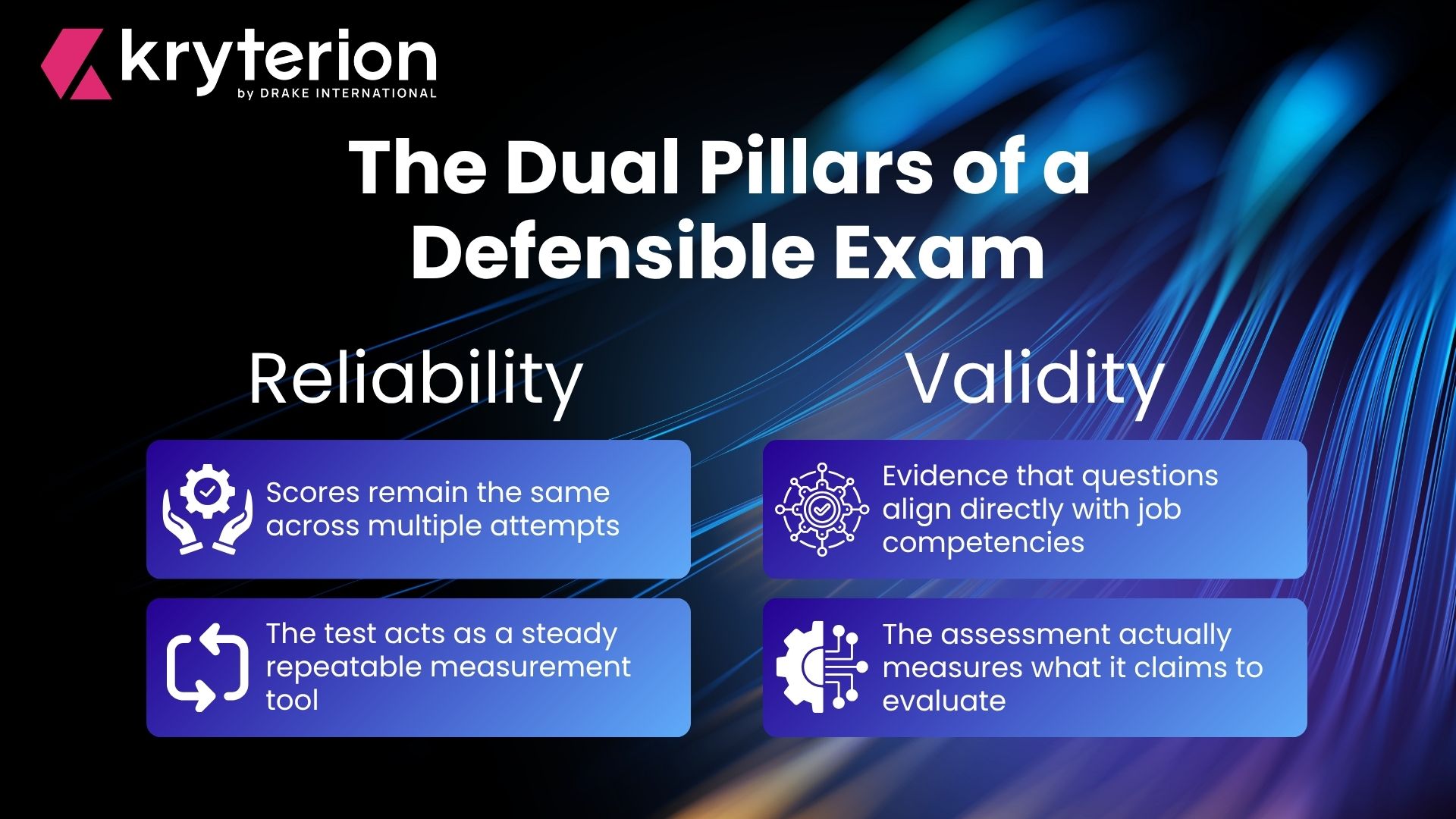

The Association of Test Publishers (ATP) released a set of AI principles in January 2022. ATP’s AI guidance consists of the following principles: Transparency, Human-In-The-Loop, Balanced Utilization, and Fair and Unbiased.

Transparency: AI systems should be transparent and understandable, so stakeholders (test takers, educators, administrators) can comprehend how decisions are made. This involves explaining AI-driven decisions and making the underlying processes visible.

Human-In-The-Loop: This principle ensures that the application of AI to test processes such as development, administration, and scoring doesn’t replace trained individuals in decision-making. AI can provide insights and suggestions but shouldn’t autonomously reach conclusions. Human involvement is essential at key stages to challenge inaccuracies, maintain fairness, and prevent bias or discrimination in the testing process.

Balanced Utilization: Balanced utilization of AI in testing involves the sponsor’s careful consideration of options to offset potential biases, such as allowing participants to opt out or providing alternative testing methods. It also entails a critical assessment of AI techniques that rely on test candidates’ personal data, to ensure fairness. By carefully examining these factors and implementing balancing mechanisms, testing organizations can effectively evaluate and manage the risks associated with AI usage in testing procedures.

Fair and Unbiased: AI algorithms used in exams or certifications should not exhibit biases against certain groups or individuals based on race, gender, ethnicity, or other characteristics. Steps should be taken to identify and rectify biases in the data and algorithms.

AI regulations to consider

With the increased adoption and application of AI technologies, countries and governing bodies are introducing regulations to mitigate risks.

For example, the European Commission proposed the EU Artificial Intelligence Act, a set of regulations for AI systems. The proposed regulations sort AI systems into four levels (unacceptable, high, limited, and minimal) based on their potential risk to individuals and society. High-risk AI systems (such as those used in critical infrastructure, law enforcement, and healthcare) are subject to more stringent regulations.

In the United States, a Blueprint for an AI Bill of Rights has been proposed. In this proposed set of AI principles, the White House Office of Science and Technology Policy “has identified five principles that should guide the design, use, and deployment of automated systems to protect the American public in the age of artificial intelligence.”

While these types of AI regulations are developing, it is important to be aware of how formal regulations may impact businesses and industries.

Kryterion’s approach to responsible AI

At Kryterion, we clearly see the opportunities and disruption that AI is bringing to our industry. Our top priority is to provide clients with the best mix of security, innovation, service, and value in our tools for test development and delivery. AI is quickly becoming an integral technology in our products and direction. Our approach is to capture the immediate benefits of AI while staying sharply aware of how it evolves, so that our products continue to meet or exceed the needs of our clients.

Please contact us to set up a meeting so we can further discuss how important AI is to our future and our clients.

Want to learn more about AI in certification?

Learn more about AI in the certification and testing industry with these resources from Kryterion: